When working with the AWS API, clients (programs, scripts, etc.) must have a way to prove who they are and what level of access to Amazon services they should have.

In a typical scenario an AWS user runs the AWS CLI (or a script that uses it) to interact with Amazon, for example to create a VPC and subnets or to back up some files to an S3 bucket. To perform any non-anonymous operation like these, the script needs a set of AWS credentials, also known as an Access Key and Secret Key.

There are a couple of ways to obtain the credentials.

Here we will discuss only the first way of obtaining AWS credentials and will cover the other methods in separate posts.

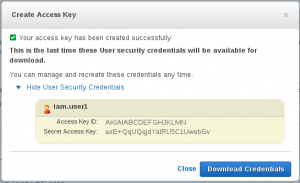

Static IAM User keys

These are the keys you get from IAM→Users→username in the Web Console.

They are great for playing with AWS from the command line: you can call the API to describe or even create, modify, and delete AWS resources, provided of course that your IAM User permissions allow such access. Using these keys is usually the first step in your cloud automation journey.

The trouble is that they are too convenient. If you as a user have some privileges and want to schedule your scripts to run unattended with the same rights, the obvious first choice is to store your personal access and secret keys on the management machine for the scripts to use, probably in ~/.aws/config, or as environment variables set in /etc/crontab, /etc/profile or similar:

    export AWS_ACCESS_KEY_ID="AKIAIABCDEFGHJKLMN"
    export AWS_SECRET_ACCESS_KEY="axE+QqUQojdYalRU5C1UwebGv"

What happens now is that these credentials stay around forever. And what happens next is that after a few months the owner of these keys leaves the organisation, their IAM User account is closed and the scripts stop working. I’ve seen that happen over and over again.

Never ever copy your IAM User keys to any production machine. Keep them on your laptop on an encrypted partition and never “productionise” them.
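One way to check whether personal keys have already leaked onto a machine is to search for them. The following is only a rough sketch: it assumes that long-term IAM User access key IDs start with `AKIA` followed by 16 uppercase letters or digits, and the function names `find_static_keys` and `env_has_static_key` are made up for this example. It will not catch keys that are stored encrypted or encoded.

```shell
# List every file under the given directory that contains a string shaped
# like a long-term IAM User access key ID.
# Assumption: such IDs look like "AKIA" plus 16 uppercase letters or digits.
find_static_keys() {
    dir="$1"
    # -r recurse, -I skip binary files, -l print file names only, -E extended regex
    grep -rIlE 'AKIA[0-9A-Z]{16}' "$dir" 2>/dev/null
}

# Succeed if the current environment exports what looks like a static key.
env_has_static_key() {
    case "${AWS_ACCESS_KEY_ID:-}" in
        AKIA*) return 0 ;;
        *)     return 1 ;;
    esac
}

# Example (not run here):
#   find_static_keys /etc
#   env_has_static_key && echo "static key found in the environment"
```

Any hit on a production machine is worth investigating, since a match usually means somebody "productionised" their own key.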

Service-specific keys

There may be external services that require AWS keys for accessing something in your account. A good example is a log analyser running in your datacentre (i.e. not in AWS) and reading CloudTrail logs from a dedicated S3 bucket, or a backup agent uploading nightly backups from your colocated server to S3. The best practice in this case is:

- Create a new IAM User with a descriptive name, e.g. log-analyser or backup-<servername>

- Give it the minimum permissions it needs, e.g. read-only or write-only access to that one single S3 bucket, restricted to a folder (key prefix) as well.

- Never use this key for anything else. It’s easy to create a dedicated account for each service. Give each account a descriptive name so you know what each key is for.
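The steps above could be sketched with the AWS CLI roughly as follows, taking the backup agent as the example. The function names, the policy name suffix and the bucket and folder arguments are all placeholders invented for this sketch; only the `aws iam` subcommands and the policy document format come from AWS. The policy grants write-only access (`s3:PutObject`) to a single folder of a single bucket.

```shell
# Print a write-only IAM policy limited to one folder of one S3 bucket.
# Both arguments are hypothetical placeholders supplied by the caller.
backup_policy() {
    bucket="$1"
    prefix="$2"
    cat <<EOF
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": "s3:PutObject",
      "Resource": "arn:aws:s3:::${bucket}/${prefix}/*"
    }
  ]
}
EOF
}

# Create the dedicated user, attach the policy inline and issue its key.
# Defined only: nothing talks to AWS until you call this yourself.
create_backup_user() {
    user="$1"
    bucket="$2"
    prefix="$3"
    aws iam create-user --user-name "$user" &&
    aws iam put-user-policy --user-name "$user" \
        --policy-name "${user}-s3-write" \
        --policy-document "$(backup_policy "$bucket" "$prefix")" &&
    aws iam create-access-key --user-name "$user"
}

# Example (not run here):
#   create_backup_user backup-web01 example-backups web01
```

An inline user policy is used here only to keep the sketch short; a managed policy attached to the user would work just as well. The last command prints the new Access Key and Secret Key, which belong to this one service and nothing else.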

Note that you won’t need these accounts for services running on AWS EC2 instances: these should use Instance Role Keys, as described in a separate post.
